Executive Summary

- Untangling the Terms: A Semantic Model is a broad term for a model that describes data, often in a descriptive, application-specific way. An Ontology is a formal, prescriptive contract of meaning that is machine-interpretable and independent of any single system. A Knowledge Graph is the result of populating an ontology with data; it is the web of facts connected according to the ontology’s rules.

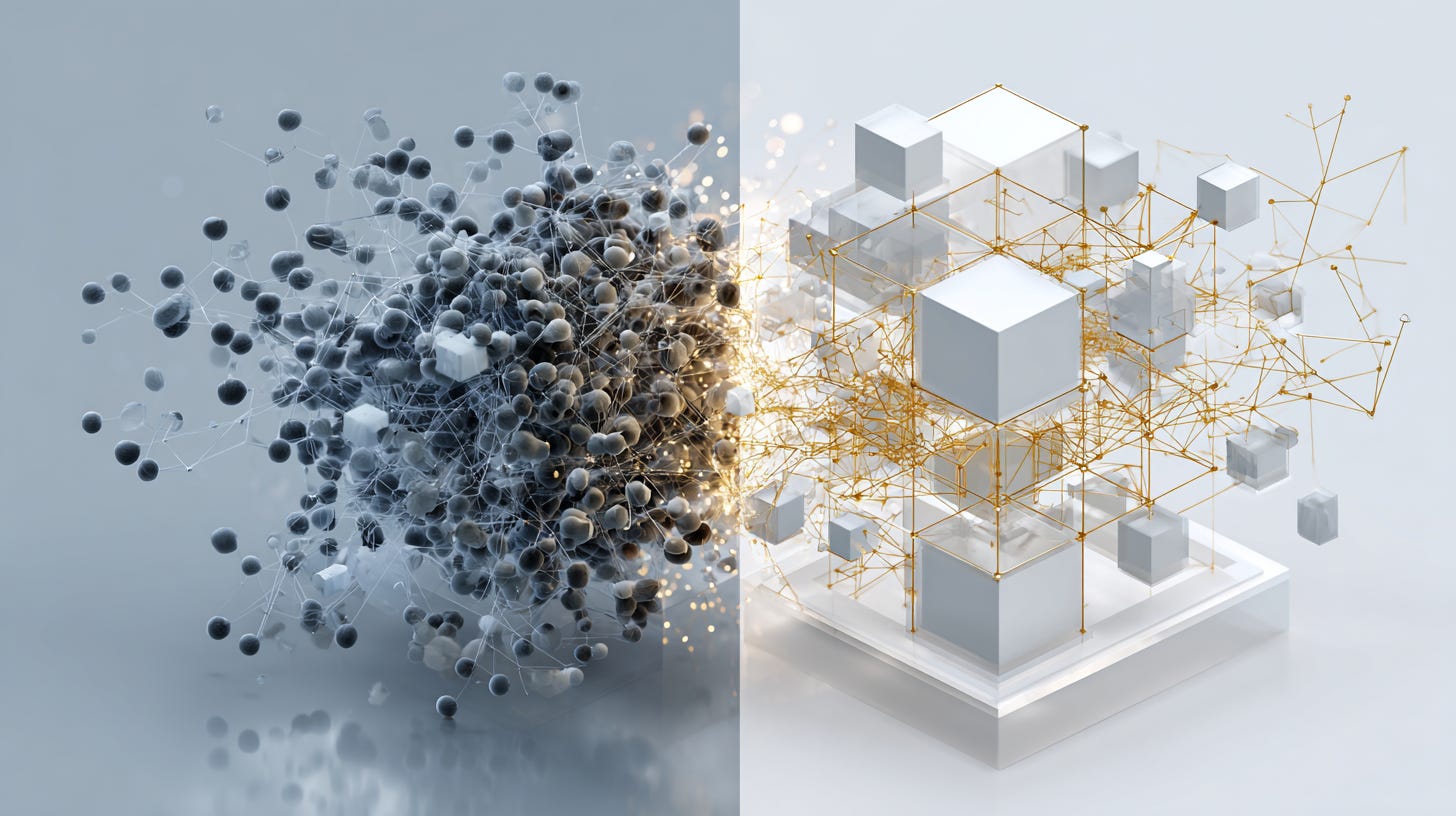

- The Core Distinction: Intentionality vs. Emergence: The critical difference is design. Ontologies are built with intentionality: a top-down, explicit blueprint for what your business concepts mean and how they relate. Many “semantic models,” especially in Labeled Property Graphs (LPGs) or data catalogs, are emergent: their meaning is inferred bottom-up from the data that happens to exist, making it brittle and inconsistent.

- Why It’s an Architectural Divide: This isn’t just terminology. For enterprise AI, it’s the difference between reliable reasoning and sophisticated guessing. Formal ontologies provide the stable semantic backbone required to prevent “linkage hallucination,” enable true explainability, and build AI systems that can reliably navigate the complexity of a real-world enterprise.

Introduction

“Semantic model,” “ontology,” and “knowledge graph” are terms now used so broadly they risk losing their meaning. Every data platform, BI tool, and catalog vendor now promises a “semantic layer.” But beneath the marketing, a fundamental architectural divide separates systems that merely describe data from those that formally encode its meaning.

For simple, single-domain questions, a descriptive model might suffice. But as soon as you need to ask complex, cross-functional questions, the kind that drive real business value, the ambiguity of emergent, informal semantics leads to failure. AI agents deliver inconsistent results, analytics remain siloed, and explainability is lost. This is because most “semantic layers” are not built on a foundation of formal, machine-interpretable meaning. They are built on suggestion and correlation.

A true enterprise-grade knowledge platform starts with meaning, not just metadata. It relies on a formal ontology to serve as an intentional, stable contract for what data means, providing the only reliable foundation for scalable reasoning and trustworthy AI.

The Core Distinction: Intentionality vs. Emergence

To understand the difference, consider two ways of building a structure. You can follow a detailed architectural blueprint, or you can assemble a shelter from materials you find nearby. Both may provide cover, but only one is engineered to be stable, scalable, and predictable.

This is the difference between an ontology and most other semantic models.

- Ontologies are Intentional: A formal ontology, expressed in standards like RDF, OWL, and SHACL, is the blueprint. It is designed top-down with the explicit intent to model a domain’s reality. It defines what a Customer or Contract is, what properties they can have, and how they are allowed to relate, independent of any specific database schema. This is a prescriptive architecture of meaning.

- Other Semantic Models are Often Emergent: The schema of a Labeled Property Graph (LPG) or the concepts in a data catalog often emerge from the data itself. Labels like “Employee” or “Resource” are applied to nodes, and relationships are drawn based on observed connections. This bottom-up approach is flexible for local, specific tasks but becomes a liability at enterprise scale. Without a governing blueprint, semantic drift is inevitable; one team’s “Customer” is another’s “Client,” and an AI agent has no formal way to know they are the same.
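
The contrast between the two approaches can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the node data, the `DECLARED_CLASSES` set, and the `EQUIVALENT_TO` table are hypothetical, and a real ontology would express the same idea in OWL (for example with `owl:equivalentClass`) rather than in Python dictionaries.

```python
# Emergent: the "schema" is simply whatever labels happen to appear in the data.
# (Hypothetical sample nodes, as two teams might have created them.)
nodes = [
    {"label": "Customer", "name": "Acme GmbH"},
    {"label": "Client", "name": "Globex Ltd"},
]
emergent_schema = {node["label"] for node in nodes}  # two unrelated labels

# Intentional: classes and their equivalences are declared up front,
# independently of any data. (Hypothetical declarations.)
DECLARED_CLASSES = {"Customer", "Contract"}
EQUIVALENT_TO = {"Client": "Customer"}


def canonical_class(label: str) -> str:
    """Resolve a label to its declared class, or fail loudly instead of guessing."""
    resolved = EQUIVALENT_TO.get(label, label)
    if resolved not in DECLARED_CLASSES:
        raise ValueError(f"{label!r} is not defined in the ontology")
    return resolved


# With the declared contract, both teams' nodes resolve to one concept.
canonical = {canonical_class(node["label"]) for node in nodes}
```

In the emergent case nothing connects “Customer” and “Client”; in the intentional case the equivalence is a declared fact, and an undeclared label such as “Resource” is rejected rather than silently accepted.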

This is the core of the issue: are you building on a foundation of semantics-by-design or semantics-by-default?

Definitions and Model: A Spectrum of Meaning

Not all knowledge organization is the same. There is a spectrum of formality, and understanding it clarifies the unique role of an ontology.

- Lexicons and Thesauri: These define terms and link synonyms (“Debitor” is related to “Customer”). They provide a shared vocabulary but lack structural depth.

- Taxonomies: These introduce a single hierarchical relationship: containment (is a). For example, a Truck is a Vehicle. This is useful for classification but cannot capture the rich, multi-dimensional relationships of a real business.

- Semantic Models (The Broad, Ambiguous Category): This term often refers to descriptive models found in BI tools, data catalogs, or LPGs. A data catalog, for instance, provides a rich inventory of data assets, their owners, and their lineage. It can link related concepts and even enforce documentation rules. However, this meaning is for human interpretation within the tool; it is not a formal, computable, and vendor-independent schema for an AI to reason over. An LPG schema is similarly informal, describing the data that exists rather than prescribing the rules for what it can mean.

- Ontologies: An ontology provides the formal, expressive power that others lack. It uses classes (concepts), data properties (attributes), and object properties (relationships) to create a machine-interpretable contract of meaning. Crucially, it defines how entities can relate across different perspectives (“worksIn,” “owns,” “isUpstreamOf”). It is the only level that provides a robust, explicit, and stable semantic backbone.

- Knowledge Graph: A knowledge graph is simply an ontology instantiated with data. It is the network of your actual customers, products, and orders, all connected according to the formal rules defined in your ontology.
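
To make the last three levels of the spectrum concrete, here is a minimal Python sketch of a taxonomy (is a), object properties with a declared domain and range, and a knowledge graph built only from facts that conform to them. All class names, instances, and the domain/range pairs are assumptions made for illustration. Note also that the sketch treats domain and range as closed-world constraints, which is closer in spirit to SHACL validation than to OWL inference.

```python
# Taxonomy: one hierarchical relation (child class -> parent class).
SUBCLASS_OF = {"Truck": "Vehicle"}

# Object properties: name -> (domain class, range class). Hypothetical choices.
OBJECT_PROPERTIES = {
    "worksIn": ("Employee", "Department"),
    "owns": ("Customer", "Vehicle"),
}

# Instance data: individual -> class. Hypothetical individuals.
INSTANCE_TYPES = {
    "alice": "Employee",
    "sales": "Department",
    "acme": "Customer",
    "truck_17": "Truck",
}


def is_a(cls, ancestor):
    """Walk up the taxonomy to test whether cls is (a kind of) ancestor."""
    current = cls
    while current is not None:
        if current == ancestor:
            return True
        current = SUBCLASS_OF.get(current)
    return False


def conforms(subject, prop, obj):
    """A fact conforms if its property is declared and both ends fit."""
    if prop not in OBJECT_PROPERTIES:
        return False
    domain, range_ = OBJECT_PROPERTIES[prop]
    return is_a(INSTANCE_TYPES[subject], domain) and is_a(INSTANCE_TYPES[obj], range_)


candidate_facts = [
    ("alice", "worksIn", "sales"),
    ("acme", "owns", "truck_17"),    # accepted: a Truck is a Vehicle
    ("sales", "owns", "truck_17"),   # rejected: a Department is not a Customer
    ("alice", "likes", "truck_17"),  # rejected: "likes" is not declared
]

# The knowledge graph is the set of facts that satisfy the ontology.
knowledge_graph = [fact for fact in candidate_facts if conforms(*fact)]
```

Only the first two candidate facts survive, which is the sense in which the graph is connected “according to the formal rules” rather than according to whatever edges happen to be present.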

Why This Matters for Enterprise AI

This distinction is critical for building AI that you can trust. An AI agent operating on an emergent model must constantly guess, whereas an agent grounded in an ontology reasons over explicit knowledge.

From Text-RAG to Schema-RAG

Most “chat with your data” systems use Retrieval-Augmented Generation (RAG) on documentation or table metadata. This is brittle. Our approach enables Schema-RAG, where the AI agent first retrieves knowledge from the ontology itself. It explores the classes, properties, and formal relationships to understand the conceptual neighborhood of a question before it attempts to query the data. This dramatically improves accuracy and relevance.
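
The retrieval step described above might look roughly like the following Python sketch. It is not the platform’s implementation: the class labels, the property list, and the function names `match_classes` and `conceptual_neighborhood` are all hypothetical, and the keyword matching is deliberately naive where a real system would likely use embeddings or a proper label index.

```python
import re

# Hypothetical ontology fragment: class -> labels, plus (domain, property, range).
CLASSES = {
    "Customer": ["customer", "client"],
    "Order": ["order", "purchase"],
    "Product": ["product", "item"],
    "Supplier": ["supplier", "vendor"],
}
PROPERTIES = [
    ("Customer", "placed", "Order"),
    ("Order", "contains", "Product"),
    ("Product", "suppliedBy", "Supplier"),
]


def match_classes(question):
    """Match question words against class labels (naive plural stripping)."""
    words = re.findall(r"[a-z]+", question.lower())
    stems = {w[:-1] if w.endswith("s") else w for w in words}
    return sorted(c for c, labels in CLASSES.items() if stems & set(labels))


def conceptual_neighborhood(question):
    """Collect matched classes and every property touching one of them."""
    matched = match_classes(question)
    touching = [p for p in PROPERTIES if p[0] in matched or p[2] in matched]
    return {"classes": matched, "properties": touching}


context = conceptual_neighborhood("Which customers placed orders last month?")
```

The resulting `context` would be handed to the language model before any data query is generated, so the model sees which concepts and relationships exist instead of inferring them from column names.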

Preventing Linkage Hallucination

The most dangerous AI errors in an enterprise context aren’t wrong facts, but wrong connections. When an LLM without an ontology is asked to join data from a CRM and an ERP, it has to guess whether crm.cust_id and erp.customer_num represent the same thing. It might get it right, but it might also “hallucinate the reasoning path,” producing a plausible-sounding but deeply incorrect answer. An ontology makes this relationship explicit, removing the need for guesswork.
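
One way to picture “making the relationship explicit” is a table that maps each source column to an ontology class and property, so a join is permitted only when both columns are declared to identify the same concept. The sketch below assumes, purely for illustration, that crm.cust_id and erp.customer_num are both mapped to a `Customer` identifier; the `erp.cost_center` column, the property names, and the helper functions are hypothetical.

```python
# Hypothetical mappings: source column -> (ontology class, identifying property).
COLUMN_MAPPINGS = {
    "crm.cust_id": ("Customer", "customerId"),
    "erp.customer_num": ("Customer", "customerId"),
    "erp.cost_center": ("CostCenter", "costCenterId"),
}


def concept_of(column):
    """Look up the declared meaning of a column; never fall back to a guess."""
    if column not in COLUMN_MAPPINGS:
        raise LookupError(f"{column} has no ontology mapping; refusing to guess")
    return COLUMN_MAPPINGS[column]


def can_join(left, right):
    """Two columns are joinable only if they are declared to mean the same thing."""
    return concept_of(left) == concept_of(right)
```

Under these assumptions `can_join("crm.cust_id", "erp.customer_num")` is true because the mapping says so, not because the names look similar, and an unmapped column raises an error instead of producing a plausible but unverified join.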

True Explainability and Governance

Because every query path is validated against the formal rules of the ontology, the reasoning is always inspectable. You can trace exactly which concepts were matched and which relationships were traversed to arrive at an answer. This provides the auditable, deterministic foundation required for enterprise governance.
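
A hedged sketch of what path validation with an inspectable trace could look like is shown below. The property table and the `validate_path` function are hypothetical and stand in for whatever mechanism a real platform uses; the point is only that each traversal step is checked against declared relationships and recorded.

```python
# Hypothetical ontology fragment: (domain class, property) -> range class.
PROPERTIES = {
    ("Customer", "placed"): "Order",
    ("Order", "contains"): "Product",
}


def validate_path(start, steps):
    """Check each step against the ontology and return a readable trace."""
    trace = []
    current = start
    for prop in steps:
        target = PROPERTIES.get((current, prop))
        if target is None:
            # An undeclared step stops the query instead of being improvised.
            raise ValueError(f"{current} has no property {prop!r} in the ontology")
        trace.append(f"{current} -[{prop}]-> {target}")
        current = target
    return trace


trace = validate_path("Customer", ["placed", "contains"])
```

The returned list names every concept matched and every relationship traversed, which is the kind of record an auditor can inspect; a path that uses an undeclared relationship fails before any data is touched.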

Trade-Offs and Limits

Of course, there are trade-offs. Designing a formal ontology requires upfront intellectual rigor and cross-departmental consensus. It is more difficult than letting a schema emerge organically. For small, isolated projects where speed is paramount and long-term consistency is not, the informal flexibility of an LPG can be practical.

However, that short-term agility comes at the cost of long-term technical debt and semantic chaos. For any organization serious about building a scalable, reliable, and interconnected data landscape for AI, the upfront investment in formal semantics is not just worthwhile; it is essential.

Conclusion

If you remember one thing, let it be this: an ontology is a prescriptive architecture of meaning, while most other semantic models are descriptive snapshots of data.

Labeled Property Graphs and data catalogs are valuable for implementation and discovery, but they cannot replace the architectural clarity that a formal ontology provides. They describe what data you have; an ontology defines what your data means.

For enterprises building the next generation of AI systems, this clarity is the new agility. The most successful data architectures will be those that embrace this principle: agility at the data layer, stability at the semantic layer. By grounding your systems in a formal ontology, you turn fragmented data into structured knowledge, enabling reasoning, interoperability, and governance at a scale that emergent models can never achieve.